Unmasking the Realities of Facial Recognition

(Animated illustration: CJ Ostrosky / POGO)

What is facial recognition?

Facial recognition is a method of using computer programs to identify individuals based on the features of their faces. Facial recognition systems create a unique “faceprint” (similar to a fingerprint) based on a pre-identified photo (or set of photos) of an individual. These systems can then rapidly compare an image of an unknown face against all the faceprints in their databases, and provide an identification if there is a match. These databases can contain millions of photos, and programs can scan through them to identify a match in less than a second.
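The matching step described above can be sketched in a few lines of code. This is only an illustration under stated assumptions, not how any particular vendor or agency system works: it assumes each faceprint is a short list of numbers, that similarity is measured with cosine similarity, and that a match is declared above a fixed threshold. The names `identify`, `database`, and `threshold`, and all the numbers, are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length numeric vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def identify(probe, database, threshold=0.9):
    """Compare an unknown faceprint against every stored faceprint.

    Returns (name, score) for the best match if its score reaches the
    threshold, otherwise (None, score). The threshold is an assumed value.
    """
    best_name, best_score = None, -1.0
    for name, faceprint in database.items():
        score = cosine_similarity(probe, faceprint)
        if score > best_score:
            best_name, best_score = name, score
    if best_score >= threshold:
        return best_name, best_score
    return None, best_score

# Hypothetical faceprints; real systems use far longer vectors.
database = {
    "person_a": [0.9, 0.1, 0.3],
    "person_b": [0.2, 0.8, 0.5],
}

match, score = identify([0.88, 0.12, 0.31], database)
no_match, low_score = identify([0.0, 0.0, 1.0], database)
```

In this sketch the first unknown face is reported as `person_a`, while the second falls below the threshold and produces no identification. Where that threshold is set is a policy choice as much as a technical one: lowering it yields more matches, but also more false ones.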

Facial recognition is also a looming privacy threat. If cameras were placed near sensitive locations such as houses of worship, political rallies, protests, or doctors’ offices, facial recognition could effortlessly catalog the intimate details of individuals’ lives.

Is this something coming in the future, or is it being used now?

It may sound more like dystopian science fiction than a real-world surveillance tool, but facial recognition is becoming increasingly prevalent. The most pervasive facial recognition surveillance exists in China. Its facial recognition dragnet located a BBC reporter wandering across a city of 3.5 million people in a mere seven minutes.

China’s powerful facial recognition surveillance network located a BBC reporter as part of a news story on the systems. GIF created from footage from the BBC.

But law enforcement agencies across the United States are also rapidly adopting facial recognition surveillance. Many local and state police forces use facial recognition systems, although details of their use are often shrouded in secrecy. The largest facial recognition surveillance system in the United States is operated by the FBI. The FBI’s Next Generation ID system maintains a facial recognition database with photos of more than 117 million Americans. The FBI conducts on average 4,055 searches per month to identify individuals with its facial recognition systems. Its database is composed primarily of driver’s-license photo databases that cities and states provided to the FBI in exchange for use of the Bureau’s facial recognition surveillance system. According to a report by the Georgetown Center on Privacy and Technology, at least one in four of the nation’s thousands of state and local police departments has the ability to run facial recognition searches using the FBI’s or other systems. However, while facial recognition surveillance is being hastily deployed, oversight rules and basic limits on its use are lagging behind.

Wait, my driver’s license photo is in an FBI database?

Yes, if you’re from one of the more than a dozen states that share this data with the FBI. And those governments did not ask for consent or even give notice to individuals that photos taken of them for driver’s licenses are being used in an FBI surveillance system. These states also made no effort to solicit public opinion or obtain approval for their actions. In fact, until a report by the Georgetown Center on Privacy and Technology revealed the formal arrangements between states and the FBI, the fact that this data was repurposed for surveillance was hidden from the public. This became a point of outrage for many Members of Congress during a hearing on facial recognition surveillance in 2017.

So if facial recognition is already happening, is it accurate?

Facial recognition accuracy can vary significantly based on a wide range of factors, such as camera quality, lighting, distance, database size, algorithm, and the subject’s race and gender (more on those last key factors in a second). Highly advanced systems generally have false positive error rates (i.e., the system erroneously declares a match) below 10 percent. While a 90 percent accuracy rate may sound good in general, it is an unacceptable risk when the end result is the possible arrest of, or even use of force (including deadly force) against, an innocent person. That’s why many experts say facial recognition should stay out of law enforcement’s hands until it becomes more accurate.

An ACLU study of Amazon’s facial recognition system used photos of every Member of Congress and scanned for matches against a mugshot database. Twenty-eight Members were erroneously identified as matching someone in the database. Copyright 2018 American Civil Liberties Union.

Originally posted by the ACLU here.

Numerous studies—including those by MIT, an FBI technology expert, and the ACLU—have also found that facial recognition is significantly less accurate when identifying people of color and women. So long as these higher misidentification rates continue, facial-recognition surveillance will constitute not just a threat to the liberty and life of innocent people, but also a serious civil rights concern because it could create de-facto algorithm-based racial profiling. Given the higher risks of inaccuracy with these populations, allowing facial recognition to serve as the basis for stop-and-frisk searches or arrests could lead to broad and unjustified law enforcement actions against these groups.

“Real-time” facial recognition, in which all faces in a live video feed are scanned and run against a watch list, is especially inaccurate. A study of real-time facial recognition conducted by law enforcement in Wales earlier this year found that false positives occurred over ten times as often as accurate identifications. Real-time misidentification issues are so serious that Axon, the top producer of body cameras in the United States, recently backed away from its long-running plan to build facial recognition software into its devices.
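To see what a ratio like that means in practice, here is a short worked calculation. It is a sketch using illustrative counts rather than the study’s actual figures: it assumes exactly ten false positives for every one accurate identification, which is the lower bound implied by “over ten times as often.” The function name `alert_precision` is hypothetical.

```python
def alert_precision(true_matches, false_positives):
    """Return the share of all system alerts that were accurate identifications."""
    total_alerts = true_matches + false_positives
    if total_alerts == 0:
        raise ValueError("no alerts to evaluate")
    return true_matches / total_alerts

# Illustrative counts only: 1 accurate identification per 10 false positives.
precision = alert_precision(true_matches=1, false_positives=10)
print(f"Share of alerts that were correct: {precision:.1%}")
```

Under that assumption, only about one alert in eleven points to the right person, and any ratio worse than ten to one pushes the share lower still.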

Finally, the federal government is failing to do basic due diligence to test and audit its facial recognition systems. A Government Accountability Office (GAO) report from last year concluded that the FBI was ignoring prior GAO recommendations and not conducting sufficient testing and auditing of its facial recognition system to check its accuracy and compliance with internal rules. These failures risk harming innocent individuals by misidentifying them, prevent proper oversight of law enforcement activities, and violate Privacy Act requirements to notify the public of changes to records systems.

What other issues does facial recognition raise?

In addition to the risk of police misidentifying and acting against innocent people, facial recognition surveillance could allow the government to easily stockpile highly sensitive information about our lives. With an automated system that can identify everyone simply by their face, the government could use facial recognition to effectively end anonymity in our public lives. Facial recognition surveillance could rapidly identify thousands of people at a protest or political rally. It could catalog everyone entering or exiting a house of worship or medical facility. It could track if suspected whistleblowers are entering a media facility or meeting with a journalist. Participation in constitutionally protected activities like these would be chilled as people become fearful of the government’s potential use of facial recognition to target them for investigation, prosecution, or harassment.

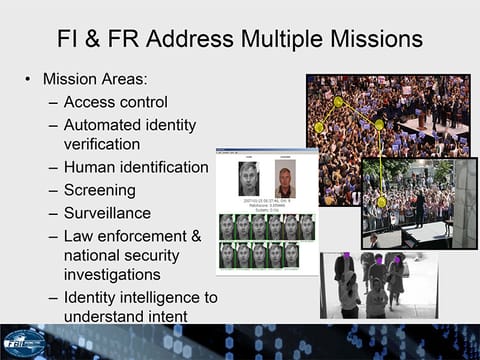

An FBI presentation previewing its facial recognition systems highlighted their ability to identify individuals at political events. (via Electronic Frontier Foundation).

But do we have privacy rights in public places?

Yes. The Fourth Amendment has always sought to properly limit the government’s power to watch its citizens. In early 2018, in fact, the United States Supreme Court explicitly ruled that the Fourth Amendment protects against surveillance of an individual’s activities in public when the government’s ability to conduct mass surveillance becomes too great. In Carpenter v. United States, the Court held that tracking a person’s location via their cell phone required a warrant because the government’s ability to easily stockpile sensitive information was simply too powerful to go unchecked, even in the context of monitoring movement in public places. Chief Justice John Roberts emphasized that “With just the click of a button, the Government can access… location information at practically no expense.” The same is increasingly true for facial recognition surveillance.

Does the government limit facial recognition surveillance?

Currently, not much. Congress has not placed any limits on facial recognition surveillance, and most states haven’t either. Oregon is the only state with a law limiting the practice: it prohibits facial recognition from being used in combination with police body cameras. Legislation has been introduced in Maryland to provide general limits on facial recognition surveillance, and a Rhode Island bill would prohibit police from using facial recognition in combination with drones. However, neither of these bills has advanced in its legislature.

What should we do about facial recognition surveillance?

It’s a complex topic that requires much more discussion by lawmakers, but it’s clear that there’s a need for common-sense limitations on the technology. To aid these conversations, the chairs of the Constitution Project Committee on Policing Reforms last year laid out some core policies to consider. These include requiring judicial authorization, such as a warrant, for police to use facial recognition and limiting the technology’s use to investigating serious crimes, such as homicide. We’ve also suggested requiring law enforcement to use other means to corroborate a facial recognition match before taking action against someone. We strongly hope Congress, state lawmakers, and police departments will consider these and other limits for facial recognition surveillance. Our Facial Recognition Task Force, composed of civil rights and civil liberties advocates, law enforcement, tech experts, and academics, is presently examining these vexing questions and will issue a report with recommendations in early 2019.
